Overfitting and Underfitting

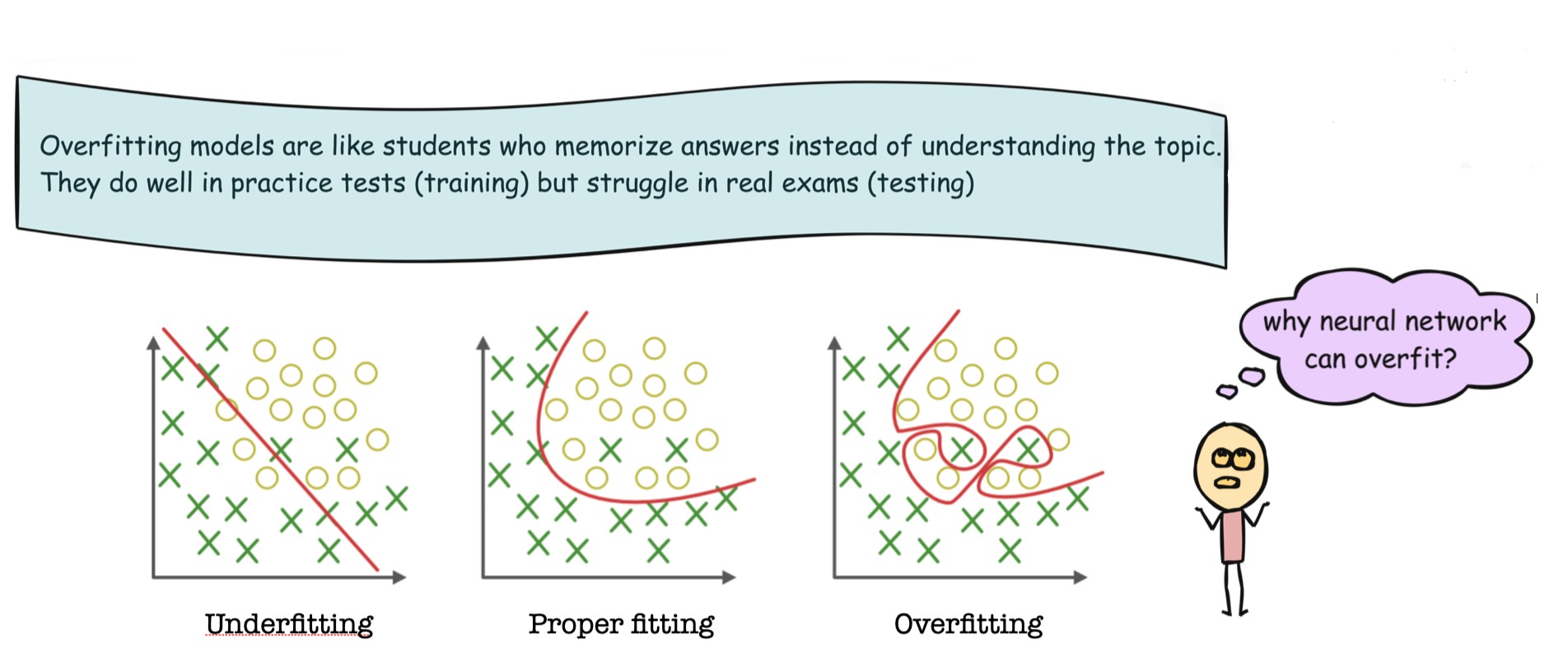

When training machine learning models, our ultimate goal is generalization: performing well not only on the training data but also on unseen data. Two common problems that prevent this are underfitting and overfitting.

A model fits data by learning patterns from training examples. The quality of this fitting determines how well the model performs on new data.

What Is Underfitting?

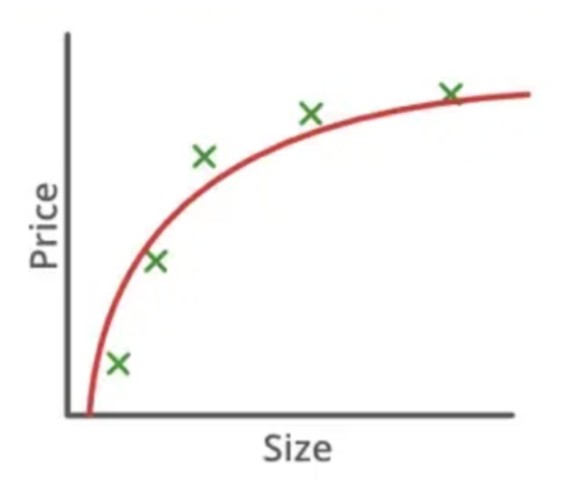

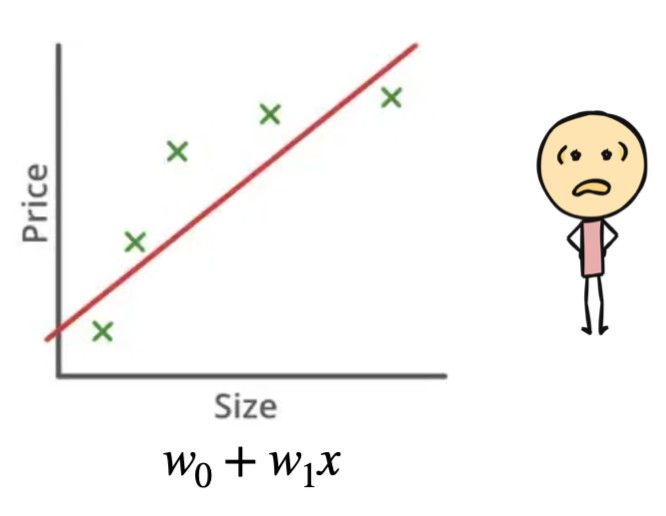

Underfitting occurs when a model is too simple to capture the underlying structure of the data.

- Performs poorly on both training and test data

- High bias, low variance

- Cannot represent complex patterns

- High training error and high test error

The model fails to learn even the basic trend in the data. It makes strong assumptions that do not hold true.

Examples

- Linear regression applied to non-linear data

- Very shallow decision trees

- Neural networks with too few layers or neurons
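
The first example above, linear regression applied to non-linear data, can be sketched in a few lines. This is a minimal illustration rather than a reference implementation: the quadratic toy data and the helper names `fit_line` and `mse` are assumptions made for the example.

```python
def fit_line(xs, ys):
    """Closed-form ordinary least squares for y = a * x + b."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    a = sxy / sxx
    b = mean_y - a * mean_x
    return a, b


def mse(xs, ys, a, b):
    """Mean squared error of the line a * x + b on the given points."""
    return sum((a * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


# Toy data (assumed for illustration): a noise-free parabola on [-3, 3].
xs = [i / 10 for i in range(-30, 31)]
ys = [x ** 2 for x in xs]

# A straight line in x cannot bend, so it underfits the parabola.
a, b = fit_line(xs, ys)
underfit_mse = mse(xs, ys, a, b)

# The same linear model on the transformed feature x**2 fits the data.
x_sq = [x ** 2 for x in xs]
a2, b2 = fit_line(x_sq, ys)
transformed_mse = mse(x_sq, ys, a2, b2)

print(f"line on x:    MSE = {underfit_mse:.3f}")
print(f"line on x**2: MSE = {transformed_mse:.3e}")
```

The training error of the straight line stays far above zero no matter how the slope and intercept are chosen, which is the signature of high bias. Changing the feature, not training longer, is what removes the error here.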

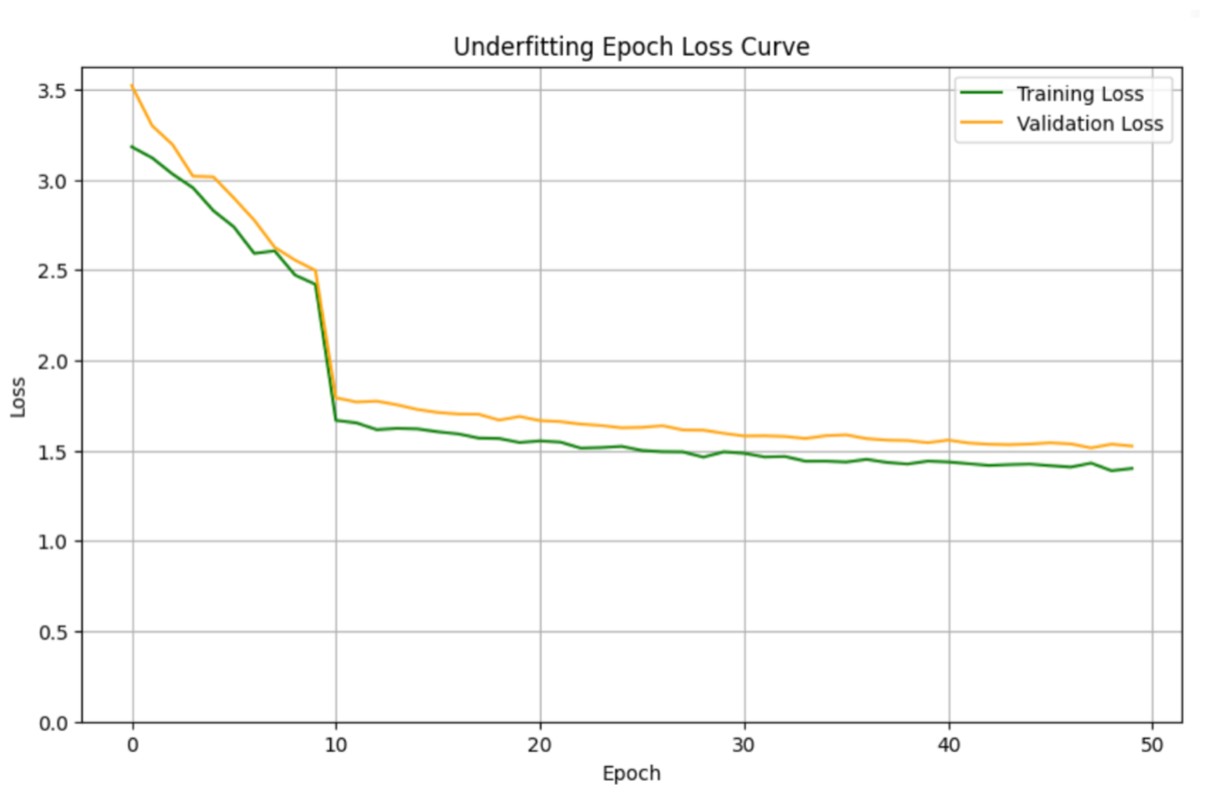

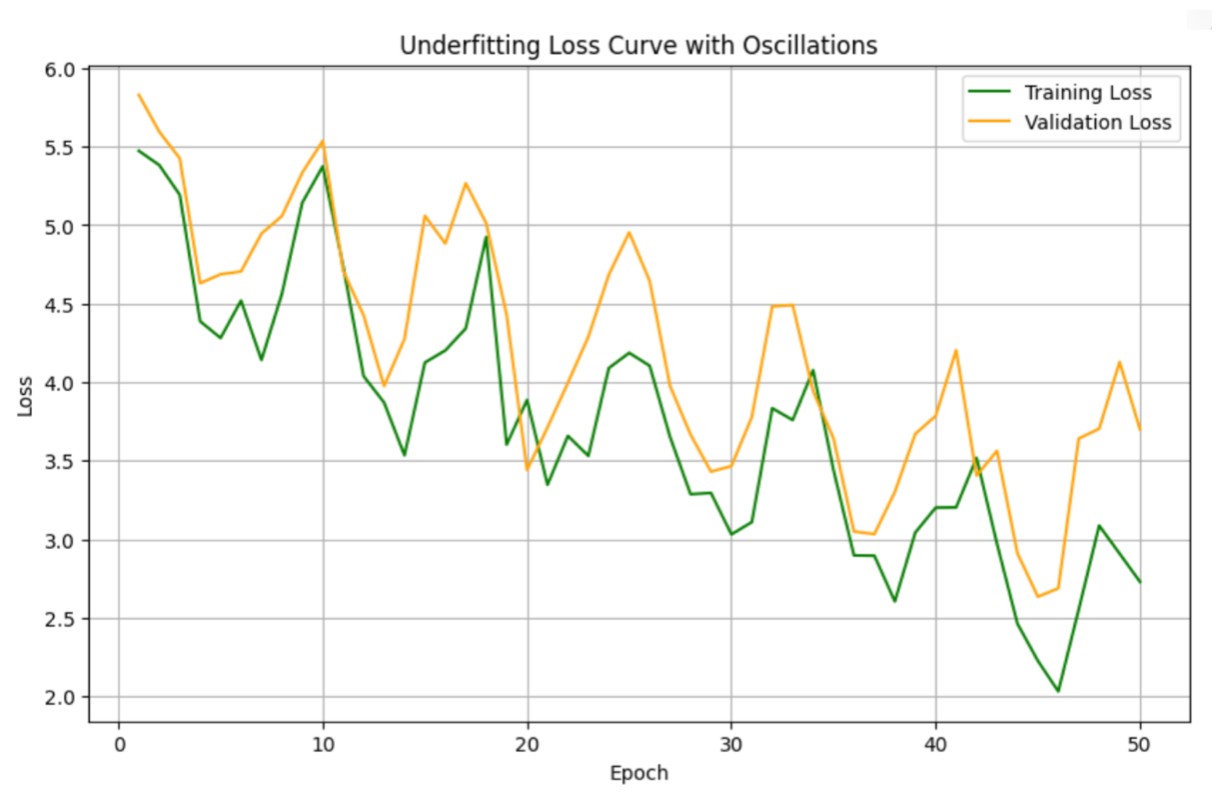

Loss Curve Behavior in Underfitting

- Training loss remains high

- Validation loss also remains high

- Loss curves may flatten early or oscillate without meaningful improvement

This indicates the model lacks capacity or has not learned enough.

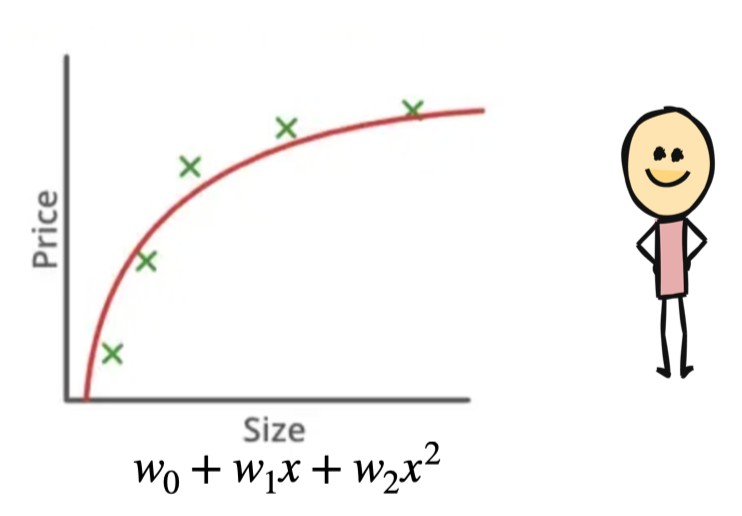

Proper (Good) Fitting

A properly fitted model captures the true trend in the data without memorizing noise.

- Performs well on both training and test data

- Balanced bias–variance tradeoff

- Low training error and low testing error

- Smooth decision boundary or function

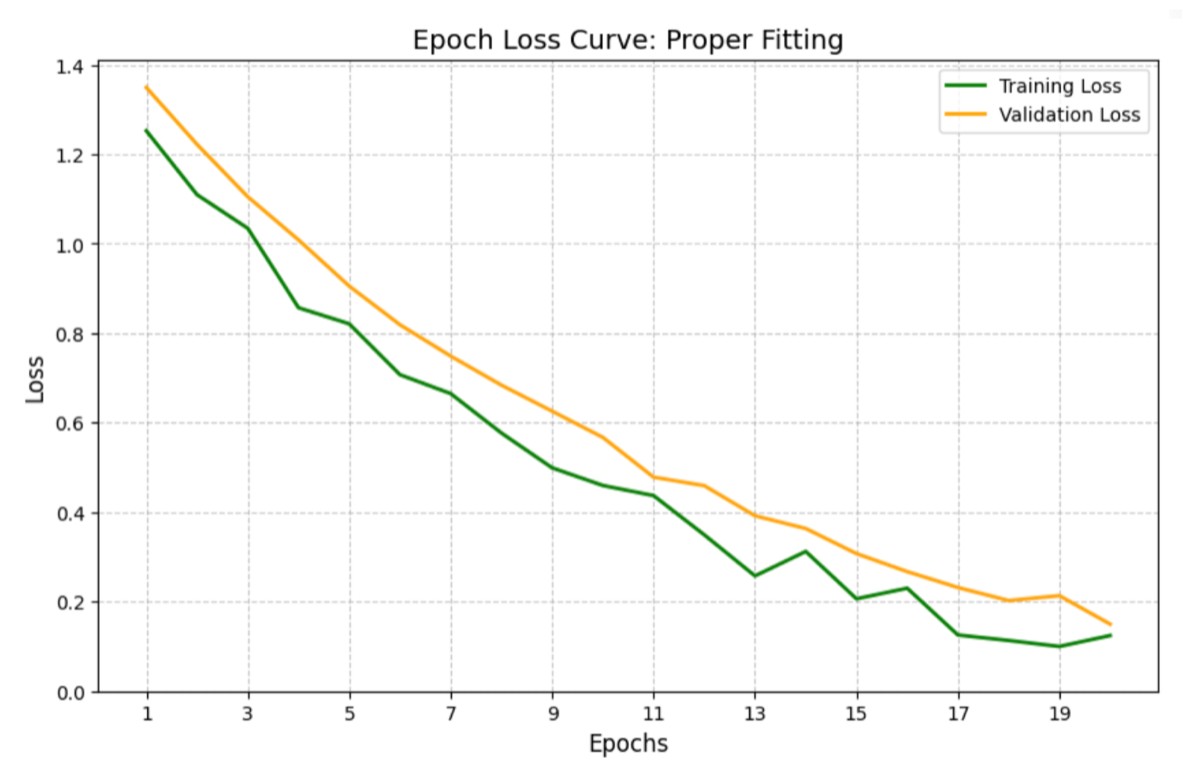

Loss Curve Behavior in Proper Fitting

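Training and validation loss both decrease and then level off at similar, low values, with only a small gap between them. On the fitted curve itself, the model follows the general pattern but does not pass through every data point.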

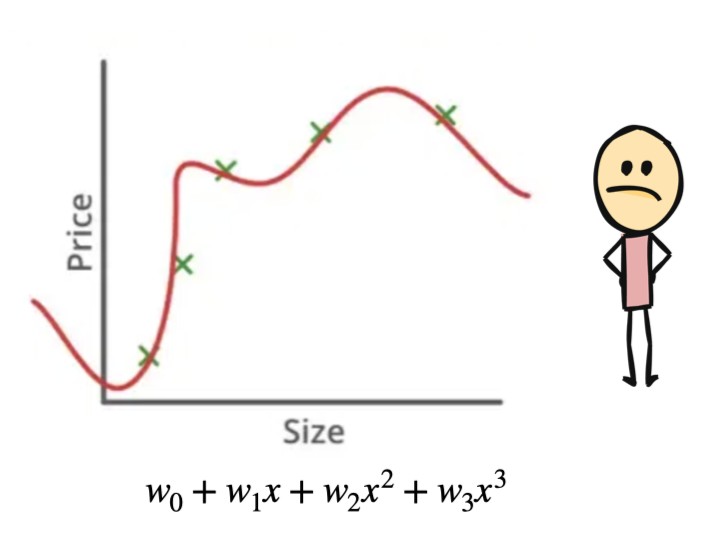

Overfitting

Overfitting happens when a model becomes too complex and starts memorizing the training data instead of learning general patterns.

- Excellent training accuracy

- Poor test accuracy

- Low bias, high variance

- Low training error, high testing error

The model fits noise and random fluctuations rather than the true signal.
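
To see memorization in isolation, the sketch below uses 1-nearest-neighbor regression. This model is not discussed in this article; it is chosen here only because it reproduces every training target exactly. The synthetic data (a linear signal plus Gaussian noise), the seed, and all function names are assumptions for illustration.

```python
import random


def knn_predict(train_x, train_y, x, k):
    """Average the targets of the k training points closest to x."""
    order = sorted(range(len(train_x)), key=lambda i: abs(train_x[i] - x))
    nearest = order[:k]
    return sum(train_y[i] for i in nearest) / k


def knn_mse(train_x, train_y, xs, ys, k):
    """Mean squared error of k-NN predictions on the points (xs, ys)."""
    errors = [(knn_predict(train_x, train_y, x, k) - y) ** 2
              for x, y in zip(xs, ys)]
    return sum(errors) / len(errors)


rng = random.Random(0)  # fixed seed so repeated runs give the same data


def make_data(n):
    """True signal is y = x; Gaussian noise (std 1) is added on top."""
    xs = [rng.uniform(0.0, 10.0) for _ in range(n)]
    ys = [x + rng.gauss(0.0, 1.0) for x in xs]
    return xs, ys


train_x, train_y = make_data(40)
test_x, test_y = make_data(40)

# k = 1 memorizes: each training point is its own nearest neighbor.
train_mse_k1 = knn_mse(train_x, train_y, train_x, train_y, k=1)
test_mse_k1 = knn_mse(train_x, train_y, test_x, test_y, k=1)

# k = 5 averages over neighbors, which smooths out part of the noise.
train_mse_k5 = knn_mse(train_x, train_y, train_x, train_y, k=5)
test_mse_k5 = knn_mse(train_x, train_y, test_x, test_y, k=5)

print(f"k=1: train MSE = {train_mse_k1:.3f}, test MSE = {test_mse_k1:.3f}")
print(f"k=5: train MSE = {train_mse_k5:.3f}, test MSE = {test_mse_k5:.3f}")
```

With `k=1` the training error is exactly zero because the noise itself has been stored, yet the test error is not. A larger `k` gives up the perfect training score and will typically generalize better on data like this, although the exact numbers depend on the sample.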

Examples

- Deep decision trees

- Neural networks without regularization

- Models trained too long on small datasets

Loss Curve Behavior in Overfitting

- Training loss continuously decreases

- Validation loss decreases initially, then starts increasing

- Clear divergence between training and validation loss
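
The pattern in these bullets can be checked numerically once per-epoch losses have been recorded. The following is a rough sketch: the loss values are made up for illustration, and `summarize_curves` is a hypothetical helper, not part of any library.

```python
def summarize_curves(train_loss, val_loss):
    """Summarize how far validation loss has drifted from training loss.

    Both arguments are lists with one loss value per epoch.
    """
    # Epoch (0-indexed) at which validation loss was lowest.
    best_epoch = min(range(len(val_loss)), key=lambda i: val_loss[i])
    return {
        "best_epoch": best_epoch,
        # How much validation loss has risen since its minimum.
        "val_rise_after_best": val_loss[-1] - val_loss[best_epoch],
        # Gap between the two curves at the final epoch.
        "final_gap": val_loss[-1] - train_loss[-1],
    }


# Illustrative values only: training loss keeps falling while
# validation loss bottoms out and then climbs again.
train_loss = [1.00, 0.70, 0.50, 0.35, 0.25, 0.18, 0.12, 0.08]
val_loss = [1.05, 0.80, 0.65, 0.60, 0.62, 0.68, 0.75, 0.83]

summary = summarize_curves(train_loss, val_loss)
print(summary)
```

A validation minimum well before the last epoch, combined with a growing final gap, matches the divergence described above. How large a gap counts as a problem depends on the task and the loss scale.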

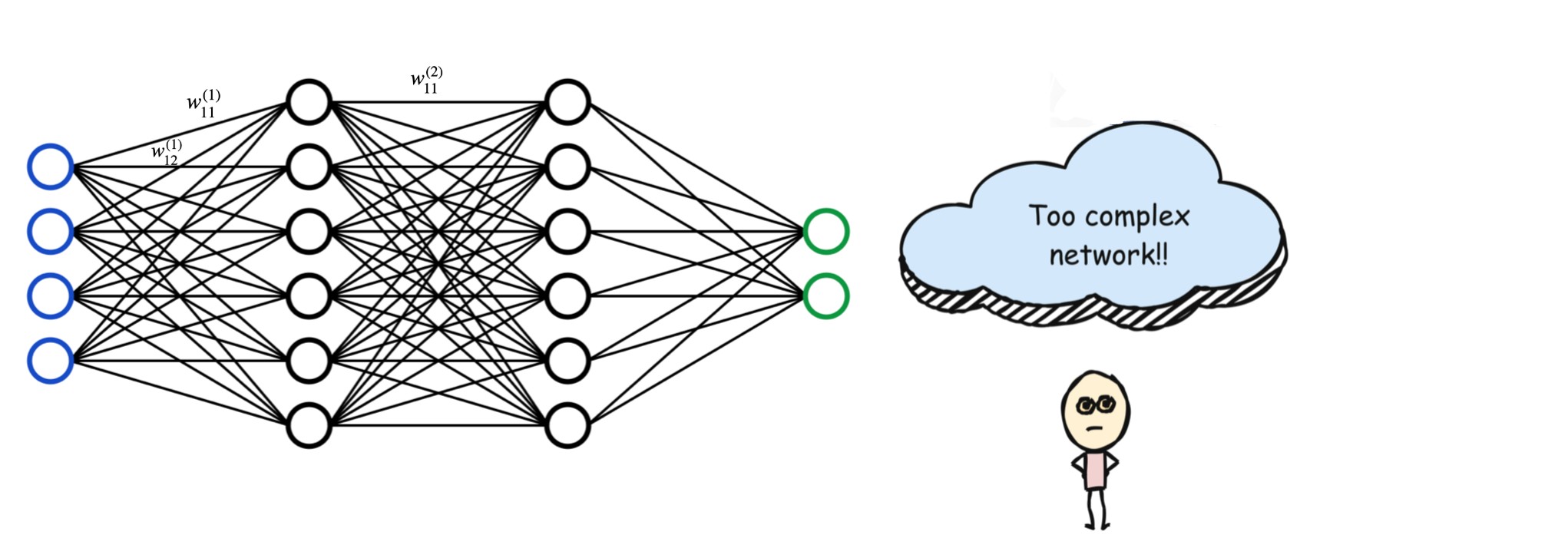

Why Overfitting Happens (Especially in Neural Networks)

- Using very complex models for simple problems

- Small datasets that do not represent the true data distribution

- Large number of parameters (weights and biases across many neurons)

- Training for too many epochs

An overly complex network has many nodes and weights. The weights determine how strongly each neuron's output influences the next layer. Networks with many layers and weights have enough capacity to capture every minor pattern, including noise, which harms generalization.
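
To get a feel for how quickly the parameter count grows, the sketch below counts the weights and biases of a fully connected network. The layer sizes are arbitrary examples and `count_parameters` is a hypothetical helper.

```python
def count_parameters(layer_sizes):
    """Count weights and biases in a fully connected network.

    layer_sizes lists the number of units per layer, input first.
    Each pair of consecutive layers contributes n_in * n_out weights
    plus n_out biases.
    """
    return sum(n_in * n_out + n_out
               for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))


# Example sizes (assumed): 784 inputs, 10 outputs.
small = count_parameters([784, 16, 10])
large = count_parameters([784, 512, 512, 10])

print(f"small network: {small} parameters")
print(f"large network: {large} parameters")
```

If the training set has only a few thousand examples, the larger network has far more free parameters than data points, which makes memorization easy unless something constrains it.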

How to Reduce Overfitting

- Use regularization (L1, L2)

- Apply dropout

- Use early stopping

- Perform cross-validation

- Collect more data

- Simplify the model architecture

- Use data augmentation (especially in vision tasks)
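
Of the techniques above, early stopping is the simplest to sketch without a framework. The version below uses a common patience-based rule; the function name, the `patience` and `min_delta` parameters, and the loss values are assumptions for illustration, not a specific library's API.

```python
def early_stopping_epoch(val_losses, patience=2, min_delta=0.0):
    """Return (stop_epoch, best_epoch) for a patience-based rule.

    Training stops once validation loss has failed to improve by more
    than min_delta for `patience` consecutive epochs. Epochs are
    0-indexed. If the rule never triggers, stop_epoch is the last epoch.
    """
    best_loss = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0

    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            # New best: remember it and reset the counter.
            best_loss = loss
            best_epoch = epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch, best_epoch

    return len(val_losses) - 1, best_epoch


# Illustrative validation losses: improving, then rising.
val_losses = [1.05, 0.80, 0.65, 0.60, 0.62, 0.68, 0.75, 0.83]
stop_epoch, best_epoch = early_stopping_epoch(val_losses, patience=2)
print(f"stop at epoch {stop_epoch}, keep weights from epoch {best_epoch}")
```

In a real training loop the model weights would be saved at each new best epoch and restored when training stops. A larger `patience` tolerates noisy validation curves at the cost of a few extra epochs.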

How to Avoid Underfitting

- Increase model complexity

- Check the model code for errors

- Check whether the architecture is appropriate or needs more parameters

- Train for more epochs

- Reduce excessive regularization

- Add more relevant features

- Use better feature transformations

- Tune hyperparameters (learning rate, depth, etc.)

- Ensure data quality

Debugging Poor Test Performance

If Both Training and Test Performance Are Poor

- Check for underfitting

- Verify model implementation

- Increase model capacity

- Train longer

- Tune learning rate and batch size

If Training Is Good but Test Performance Is Poor

- Likely overfitting

- Apply regularization

- Ensure training and test data come from similar distributions
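
The two cases above can be turned into a rough first-pass check. This is only a sketch: the thresholds `target_error` and `max_gap` are arbitrary assumptions that would need to be set per task, and `diagnose` is a hypothetical helper.

```python
def diagnose(train_error, test_error, target_error=0.10, max_gap=0.05):
    """Classify a model from its training and test error rates.

    target_error: highest training error still considered acceptable.
    max_gap: largest acceptable difference between test and training error.
    Both thresholds are illustrative and task-dependent.
    """
    if train_error > target_error:
        # The model does not even fit the data it was trained on.
        return "underfitting"
    if test_error - train_error > max_gap:
        # Fits the training data but does not transfer to the test set.
        return "overfitting"
    return "good fit"


print(diagnose(0.30, 0.32))  # both errors high
print(diagnose(0.02, 0.25))  # large train/test gap
print(diagnose(0.05, 0.07))  # both low and close together
```

A large gap is not proof of overfitting on its own. As the last bullet notes, the same symptom appears when the training and test data come from different distributions, so that should be ruled out before adding regularization.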

Key Takeaway

Underfitting means the model hasn’t learned enough.

Overfitting means the model has learned too much: it has memorized noise along with the signal.

The goal is to learn just enough to generalize.

Achieving the right balance between bias and variance is central to building effective machine learning models.